Migrating database from ASP.NET Identity to ASP.NET Core Identity

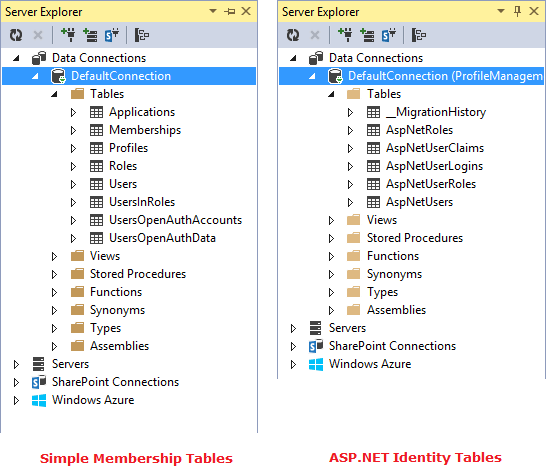

As you may know, the ASP.NET Core Identity table structure is different from what we had earlier in ASP.NET Identity. The identity system we have today in .NET Core is mature and has evolved continuously, from ASP.NET Membership through ASP.NET Identity 1 and ASP.NET Identity 2 to ASP.NET Core Identity.

Recently I had to migrate a few applications to ASP.NET Core, and their identity databases along with them. Because the table schema has changed, I had to rethink the approach and write a migration script, which I would like to share with you today. It is simple: just three steps and I had everything ready.

STEP 1: Rename the existing tables

STEP 2: Create the ASP.NET Core Identity tables

STEP 3: Migrate data from the old tables (ASP.NET Identity) to the new tables (ASP.NET Core Identity)

Script: https://gist.github.com/itorian/c699e8534b392a6c726ec66c48100072

You should also watch my video, where I demo the migration.
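To make the three steps concrete, here is a minimal sketch of the same rename, create, copy pattern for the users table only. It is not the script from the gist: it uses Python with an in-memory SQLite database as a stand-in for SQL Server, the `_Old` suffix and the function names `make_legacy_db` and `migrate_users` are my own assumptions, and the column list is deliberately trimmed. The real tables have more columns, and roles, claims and logins need the same treatment.

```python
import sqlite3


def make_legacy_db():
    """Build a tiny stand-in for an ASP.NET Identity 2 users table (trimmed columns)."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        """CREATE TABLE AspNetUsers (
               Id TEXT PRIMARY KEY,
               UserName TEXT NOT NULL,
               Email TEXT,
               PasswordHash TEXT,
               SecurityStamp TEXT,
               LockoutEndDateUtc TEXT
           )"""
    )
    conn.execute(
        "INSERT INTO AspNetUsers VALUES (?, ?, ?, ?, ?, ?)",
        ("1", "alice", "alice@example.com", "hash", "stamp", None),
    )
    conn.commit()
    return conn


def migrate_users(conn):
    """Apply the three steps to the users table."""
    # STEP 1: rename the existing table ("_Old" is a hypothetical suffix).
    conn.execute("ALTER TABLE AspNetUsers RENAME TO AspNetUsers_Old")

    # STEP 2: create the table in the ASP.NET Core Identity shape.
    # Core Identity adds normalized name/email columns and a concurrency
    # stamp, and stores the lockout end in a column named LockoutEnd.
    conn.execute(
        """CREATE TABLE AspNetUsers (
               Id TEXT PRIMARY KEY,
               UserName TEXT,
               NormalizedUserName TEXT,
               Email TEXT,
               NormalizedEmail TEXT,
               PasswordHash TEXT,
               SecurityStamp TEXT,
               ConcurrencyStamp TEXT,
               LockoutEnd TEXT
           )"""
    )

    # STEP 3: copy the data across, deriving the normalized columns by
    # upper-casing the originals. ConcurrencyStamp is left NULL here.
    conn.execute(
        """INSERT INTO AspNetUsers
               (Id, UserName, NormalizedUserName, Email, NormalizedEmail,
                PasswordHash, SecurityStamp, LockoutEnd)
           SELECT Id, UserName, UPPER(UserName), Email, UPPER(Email),
                  PasswordHash, SecurityStamp, LockoutEndDateUtc
           FROM AspNetUsers_Old"""
    )
    conn.commit()


if __name__ == "__main__":
    db = make_legacy_db()
    migrate_users(db)
    for row in db.execute("SELECT UserName, NormalizedUserName FROM AspNetUsers"):
        print(row)
```

Keeping the renamed table around until the new one has been verified gives you an easy way back if something looks wrong after the copy.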